Some of the material in these articles will be familiar to readers, but my purpose here is to consolidate many thoughts, some old, some new, in one piece. My hope is to inform discussion about transit’s recovery in Toronto and in particular to provide context for the inevitable political debate about what we should attempt, and the managerial issues of knowing whether we have succeeded.

Updated Aug. 30/22 at 1:25pm: Sundry typos and grammatical faux pas corrected. No substantive change to the text.

Since early 2020, the TTC and transit systems everywhere have wrestled with the ridership and revenue losses of the pandemic era. The goal of both management and politicians has been to just “keep the lights on” and provide some level of transit service. Toronto, with the aid of Ontario and Canadian governments, has worked particularly hard to continue an attractive service, at least on paper.

Service quality is a real bugbear for me, and the widening gap between the advertised service and what is actually provided should be a major concern. Next year, 2023, Toronto will likely see the end of special Covid-related subsidies, and a growth in demand back to pre-pandemic levels, although the timing of these events could prove challenging. Meanwhile, City Council “net zero” emission plans call for a major shift of travel onto transit. This will not happen with a business as usual approach to transit.

The focus must shift from muddling through the pandemic to actively improving the transit system, and to doing that with more than a few subway lines whose first riders are almost a decade away.

Key to running more and better transit is a solid understanding of how the system performs together with a planning rationale for growth. This brings me to two documents: the TTC’s Service Standards and the monthly statistics included in the CEO’s Report.

In this first of two articles, I will review the Service Standards and discuss some general principles about reporting system behaviour. In the second, I will turn to the CEO’s Report.

There are two essential problems:

- The actual machinery of the Service Standards is not well understood, and the current document was endorsed by a previous TTC Board almost without debate. Superficially, the standards appear to call for good service, but in practice they hide as much as they show in reporting on quality. The Board did ask for follow-up information on improving standards (more service, less crowding), but management never delivered this feedback.

- To the degree that management reports system performance, this is done at a summary level where the day-to-day reality of transit service and rider experience are buried in averages that give no indication of how often, when or where the standards are not achieved.

General Principles

A single day’s operation produces a vast amount of data from vehicle tracking, passenger counting and fare systems. Readers of my operational analyses of routes will know that even on a consolidated basis, there is a lot to look at. Not even the most dedicated transit analyst (paid or otherwise) has the time to review all of this in detail.

However, the behaviour of these data must be understood at the detailed level if anything meaningful is to come from them. The problem, then, lies in consolidation and presentation of important factors day by day and over longer periods to identify what works and where problems need to be addressed.

Moving the Goalposts

A major problem with achieving improvement is to define just what that looks like, and to ensure that metrics are not constructed to make business as usual look acceptable. This has been a major problem with TTC metrics and standards for years.

Many goals and standards were codified that were simply “what we’ve always done”. Others were changed along the way, but without historical context or a sense of whether we could aim higher. Some metrics hide the peaks and valleys giving us no sense of the range of actual experience or of what might be achieved consistently with more effort, or where problems lurk that deserve more attention.

At the political level, the TTC’s Board simply rubber stamps whatever standards or metrics management puts in front of them and does not explore the “what ifs” behind the numbers. Achieving a high value against a target is meaningless if the target’s inherent value is not understood. Handing out gold stars because the charts look good without knowing where problems lurk, or how much better the results and rider experience could be, is not a mark of good governance or oversight.

Budgets

A major factor in any transit operation is the budget available to operate service and maintain the system. I have been reading TTC budgets for almost 50 years, and a dreaded phrase in any proposal is “subject to budget availability”. There is a similar problem at the City. The TTC Board or Council may want or even direct that something happen, but if it is “subject to budget” or only “for consideration in a future budget”, we can wait a long time for results. Some requests just fall off of the table after elections when a new board takes office.

However, the greater problem is that there is no report showing the services and the quality that are forgone because of budget limitations. Rarely is there a “what if” presentation included with budgets to show what improvements might be made and how much they will cost. This has hamstrung service improvements and fare changes for decades. “It costs too much” is a common refrain, but rarely is a costed option on the table for debate.

The 2003 Ridership Growth Strategy tried to break this pattern by asking what might be done to build ridership, and how much it would cost. The political debate then turned to how we might pay for changes. Oddly enough, some changes proved to have modest costs and considerable benefit. The two-hour transfer is a recent example. An essential part of planning is not to say “we can’t afford it”, but rather to first explore options, benefits and “how might we afford” new policies. Oddly enough, we never hear about affordability when it comes to vanity rapid transit projects.

Improving the TTC and attracting more riders will require a lot of hard decisions about how much we, collectively, are prepared to spend on transit. The same applies at a regional level with fare integration and service improvements. Key, however, is that the options must be on the table for public debate.

Averages vs Details

Almost all TTC data has, historically, been reported at an average level showing at best a day’s operation or a longer period. This has the effect of making service look much better than it might really be. A simple example is the average headway (interval between vehicles) on a route. Buses might be scheduled to appear every 5 minutes, but actually show up in pairs every 10, a pattern familiar to all riders. Reporting only the average gives the impression of better service than is actually provided.
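To make the arithmetic concrete, here is a minimal Python sketch comparing the two patterns described above. It assumes riders arrive at the stop at random, in which case the expected wait is `sum(h*h) / (2 * sum(h))` over the headways `h`. The function names and the one-hour sample are illustrative only and do not come from any TTC report.

```python
def average_headway(headways):
    """Plain mean of the gaps between successive vehicles, in minutes."""
    return sum(headways) / len(headways)


def average_wait(headways):
    """Mean wait for riders who arrive at random (an assumption)."""
    return sum(h * h for h in headways) / (2 * sum(headways))


# One hour of service with 12 buses, written as the gap in front of each bus.
even_service = [5] * 12        # a bus every 5 minutes
paired_service = [10, 0] * 6   # two buses together every 10 minutes

for label, gaps in (("even", even_service), ("paired", paired_service)):
    print(label, average_headway(gaps), average_wait(gaps))
```

Both patterns report the same 5 minute average headway, but the average wait doubles from 2.5 to 5 minutes when the buses run in pairs.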

Even if the buses appear regularly, they might not operate to their advertised destinations. The average headway will not reveal the useful headway of vehicles going places riders actually want to travel.

Similarly, loads on transit vehicles will be affected both by the regularity of service and by variations in sources of demand (e.g. shift changes, school dismissal times). The average load on a route can be very different from the load on individual vehicles. In a worst case situation, some vehicles can run nearly empty while others have standees. On average, the route is not overloaded, but most riders are on the crowded bus and see that as the “typical” condition.
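The same effect can be sketched for loads. The figures below are hypothetical (a crowded bus followed closely by a nearly empty one, twice over) and are not taken from TTC counts. The point is only that the load averaged over vehicles differs from the load averaged over riders.

```python
def average_load_per_vehicle(loads):
    """What a route-level average reports: riders divided by vehicles."""
    return sum(loads) / len(loads)


def average_load_seen_by_riders(loads):
    """Each rider experiences the load of their own bus, so weight by riders."""
    return sum(load * load for load in loads) / sum(loads)


def share_of_riders_above_average(loads):
    """Fraction of all riders who are on a bus fuller than the route average."""
    mean = average_load_per_vehicle(loads)
    return sum(load for load in loads if load > mean) / sum(loads)


loads = [60, 10, 60, 10]  # hypothetical: gap bus, then its follower

print(average_load_per_vehicle(loads))
print(round(average_load_seen_by_riders(loads), 1))
print(round(share_of_riders_above_average(loads), 2))
```

In this made-up case the route averages 35 riders per bus, yet six riders in seven are on a bus carrying 60.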

Exception reporting

Any system to process and track all of this data, whether in real time for route management or after the fact for review, needs to flag the exceptions. How often, when and where is service running in bunches? Are individual vehicles overloaded even though on average the route’s capacity appears to be adequate?
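As a rough illustration of what flagging exceptions could look like, the sketch below scans observed departure times at one point on a route and lists the bunches and gaps. The thresholds (`bunch_ratio`, `gap_ratio`) and the sample data are hypothetical choices for the example, not values from the Service Standards or from any TTC system.

```python
from dataclasses import dataclass


@dataclass
class HeadwayException:
    departure_min: float   # when the flagged vehicle left, minutes after start
    headway_min: float     # gap between it and the vehicle ahead
    kind: str              # "bunch" or "gap"


def find_exceptions(departures, scheduled_headway, bunch_ratio=0.5, gap_ratio=1.5):
    """Flag headways well below or well above the scheduled value."""
    exceptions = []
    for previous, current in zip(departures, departures[1:]):
        headway = current - previous
        if headway < bunch_ratio * scheduled_headway:
            exceptions.append(HeadwayException(current, headway, "bunch"))
        elif headway > gap_ratio * scheduled_headway:
            exceptions.append(HeadwayException(current, headway, "gap"))
    return exceptions


# Hypothetical observations for a route scheduled every 5 minutes.
observed = [0, 5, 6, 16, 17, 22, 27]
flagged = find_exceptions(observed, scheduled_headway=5)

for item in flagged:
    print(item.kind, "at minute", item.departure_min, "headway", item.headway_min)
```

A report built this way says when and where service broke down, which an all-day average cannot do.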

If vehicles are missing, why did this happen, and for how long? Was it equipment breakdown? A missing driver? An emergency diversion of service to another route? Was service adjusted to allow for the missing vehicle by spacing buses, or was the gap left as is in the name of running the rest of the service “on time”?

Recent analyses on this site suggest that there will be a lot of “exceptions” if this type of reporting were enabled because the overall service reliability is poor on many routes. That in itself would be news, and not the sunny, bright story the TTC loves to tell. The challenge is not to hide this, or to downplay it as the result of two crisis years, but to address underlying problems and drive down those exceptions.

Service Standards

Origins

The idea of having service standards began in the days of Mayor John Sewell and work within the City of Toronto’s Planning Department. It was a time when the system was still growing, and there was competition for transit resources. Were they being allocated fairly, or to squeaky wheels, or were routes running just because they were always there?

Over the years there have been a few iterations of this scheme, and the most recent was approved by the TTC Board in May 2017. See Update to TTC Service Standards for the background and a presentation deck. My own review at the time is in TTC Service Standards Update. This is a long article and I will not repeat all of its arguments here. In the context of the CEO’s report, the important issues are these:

- The standards for loading and crowding are reported on an average basis and give no indication of problem times, locations and routes. It is assumed that management reacts to such problems, but there is no list of deserving improvements that are not operated due to limits on resources (financial, fleet or operating staff). Such a list did appear from time to time in the CEO’s Report (or the Chief General Manager’s Report as it was once called), but not regularly. It can be a tad embarrassing when the list gets long.

- The on time performance standard is reported only for terminals, and with standards so lax that much service riders would think of as unreliable is regarded as acceptable. There is no reporting on service reliability along the length of routes, only at their origin.

- Although there is a missed trip standard, there is no report on this, nor is there any reporting of trip cancellations due to various constraints.

The goal of the standards is consolidated in the following paragraph.

From the customer’s perspective, the transit network should provide convenient and reliable service when and where they need to go, with good customer communication and service. From a system-wide transit operations perspective, the transit network must be manageable, operable, and sustainable – all within the constraints of a fixed operating budget.

This contains two conflicting goals: “good customer communication and service” is often elbowed aside by “constraints of a fixed operating budget”.

From a policy setting viewpoint, there is a major problem that the TTC Board does not understand the latitude their approved standards actually give management, and the degree to which unreliable and inadequate service can be cited as meeting “Board approved standards”. This leaves a gaping hole between reported service quality and actual rider experience.

Crowding

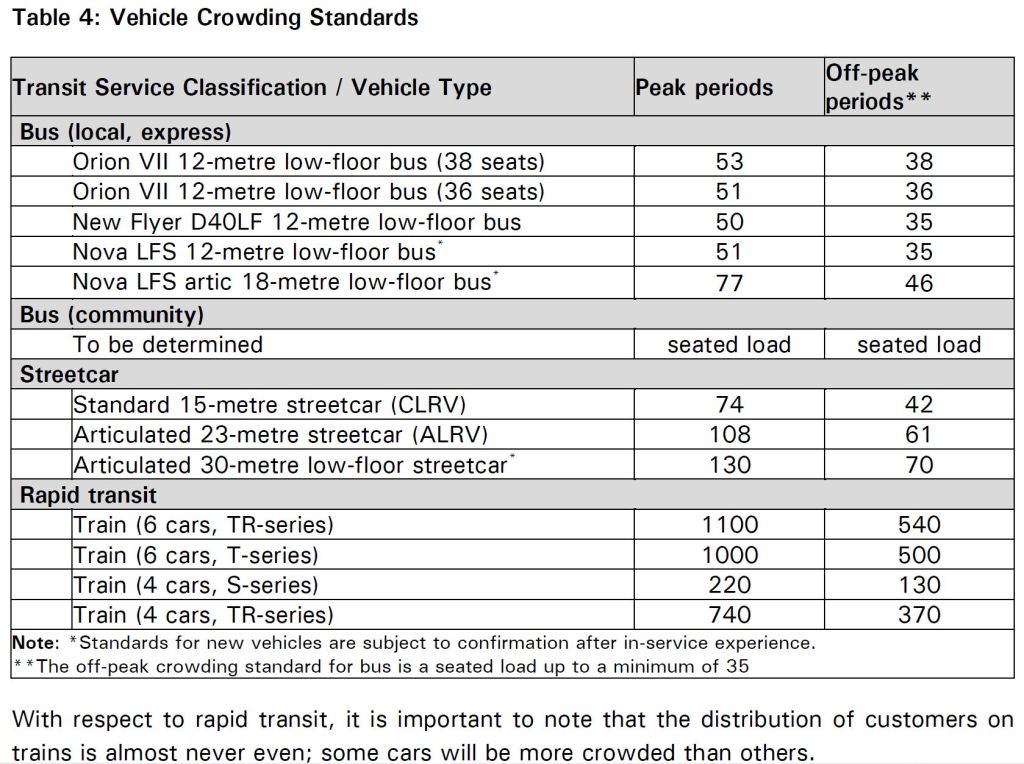

The standards for vehicle crowding are based on a service design load, something that can be sustained over a period of time, not on crush loads. Within an hour, some buses may be packed to the roof and others half empty. For the purpose of the standards, it is the average that counts. Even without erratic service, this addresses a “peak within peak” problem where there could be a surge of traffic that lasts only 30 minutes on only part of a route.

This table has not been updated since the standards were published. It refers to some vehicle types that no longer operate and does not include the recently acquired eBuses.

During the pandemic, TTC set its standards lower than those shown here pending ridership recovery. That recovery is still in progress, but has reached a point where there is no longer a Covid adjustment reducing the target.

In normal times, the TTC’s standard for reviewing service levels is that if the crowding standard is exceeded 95% of the time over 6 months, service would be improved. If on board loads fall under 80% of the target, service would be reviewed for trimming subject to policies on minimum headways and transit access.

On Time Departure

Running service “on time” is something of a mantra for transit systems including the TTC. The problem, however, is that most Toronto services are scheduled at a frequency where “on time” matters a lot less than “reliability”. Unfortunately, the standard aims at being “on time”, and with considerable leeway. Reliability is assumed to follow automatically.

It does not.

The basic rule is that vehicles should depart from their terminals within a range from 1 minute early to 5 minutes late. This applies to all routes regardless of scheduled headway. An obvious problem for frequent services is that the “on time window” could be wider than the scheduled headway and bunches of two (or even three) vehicles would meet the standard.
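A small worked case shows how a bunch can pass. The sketch below applies the 1 minute early to 5 minutes late window to three departures on a route assumed, for illustration, to be scheduled every 4 minutes. The times are invented.

```python
EARLY_LIMIT_MIN = 1  # departures up to 1 minute early count as on time
LATE_LIMIT_MIN = 5   # departures up to 5 minutes late count as on time


def is_on_time(scheduled_min, actual_min):
    """Apply the terminal departure window described in the text."""
    deviation = actual_min - scheduled_min
    return -EARLY_LIMIT_MIN <= deviation <= LATE_LIMIT_MIN


scheduled = [0, 4, 8]  # hypothetical 4 minute headway
actual = [5, 5, 7]     # first bus 5 late, second 1 late, third 1 early

on_time = [is_on_time(s, a) for s, a in zip(scheduled, actual)]
actual_headways = [b - a for a, b in zip(actual, actual[1:])]

print(on_time)          # [True, True, True]
print(actual_headways)  # [0, 2]
```

All three trips are “on time” even though they leave within two minutes of each other.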

There is a separate headway performance standard that allows a window of up to six minutes deviation from the schedule 60% of the time. This is so lax as to be meaningless particularly on an all-day basis. There are no headway-based published reports except for subway service (see below).

The On Time results are averaged across all routes on an all day basis.

(There is also an On Time Arrival standard using the same +1/-5 range, but the target is that it would be achieved only 60% of the time. Given the TTC’s love for padding schedules to ensure vehicles do not run late, vehicles can get to their terminals well before their time.)

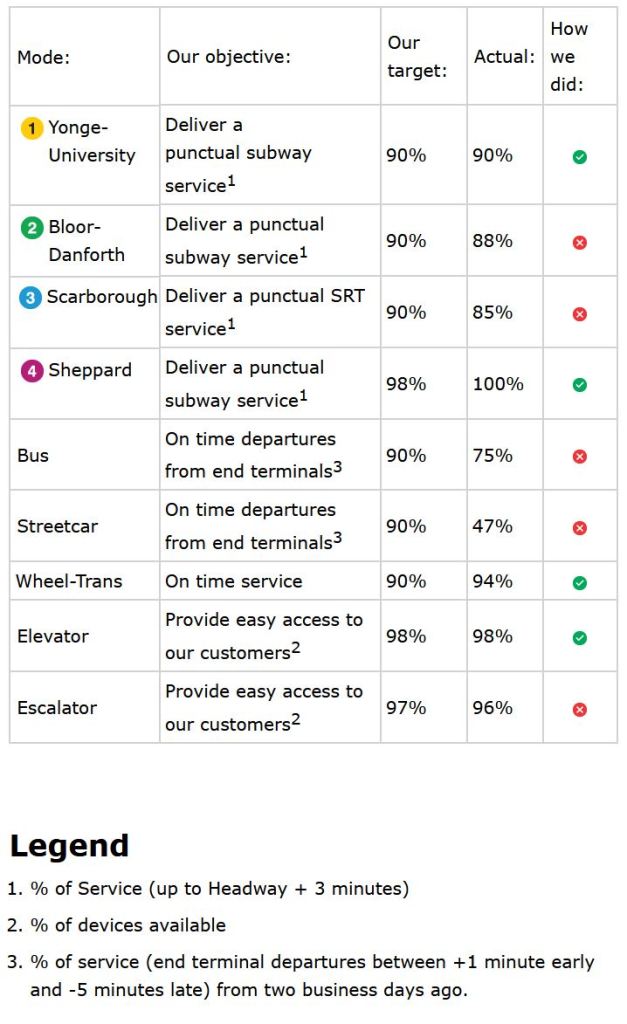

The TTC publishes a Customer Service Report for each weekday. Here is the report for Monday August 22 (a week ago as I write this). Note that only on time departure stats are cited for surface modes.

From this report, we see that they only achieved “on time” performance for 75% of bus trips, but we do not know which routes or when this happened. Some routes may be a model of perfect service while others could be a complete mess. Streetcar services are particularly vulnerable to disruption from many events including construction and traffic congestion. Reliable headways would be a better metric of service quality.

Missed Trips and Short Turns

Missed trips are defined as trips leaving more than 20 minutes late. This does not address trips that simply do not operate at all because a bus or streetcar is missing, broke down or was short turned.

According to the standards, the goal is to minimize short turns, but this can be counterproductive:

- If bunches of vehicles form for whatever reason, the fastest way to sort them out is to short turn some into the gap they created. If this is not done, the gap gets wider and wider and the service is terrible. This behaviour is becoming common on some bus routes where avoidance of short turns seems to take precedence over provision of reliable service.

- On streetcar routes, the TTC claims to have all but eliminated short turns even though vehicle tracking data show that at least 10% of trips are short turned on most days, sometimes many more. Not reporting them does not make them vanish.

With the staffing problems of the Covid era, missing operators is another regular problem. In several recent service analyses, I have shown that buses can be missing for hours at a time. Often there is no compensating adjustment to service.

Pre-pandemic, there was a period when service was limited by the number of available buses, but this has not been the case for a few years due to (a) lower peak fleet requirements with reduced schedules and (b) a higher ratio of spare buses available for use as extras, change-offs and service improvements.

There is no report on the number of missed trips whatever might have caused them.

Rapid Transit Service

Two standards apply to rapid transit lines:

- A headway deviation of up to 100% of the scheduled value is allowed. This means that for trains scheduled every 5 minutes, there can be a 10 minute gap.

- The total capacity operated (counted as trains) should be at least 90% of the scheduled value. Thus if 20 trains per hour are scheduled (a 3 minute headway), then at least 18 should be operated.
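The two rules above can be written as simple checks. This is only a sketch of the rules as described here; the function names are made up, and how the TTC actually measures either value is not specified in this article.

```python
def meets_headway_standard(observed_headway, scheduled_headway):
    """Deviation of up to 100% of the scheduled headway is allowed."""
    return abs(observed_headway - scheduled_headway) <= scheduled_headway


def meets_capacity_standard(trains_operated, trains_scheduled):
    """At least 90% of scheduled trains must run (integer math avoids rounding)."""
    return trains_operated * 10 >= trains_scheduled * 9


print(meets_headway_standard(10, 5))    # a 10 minute gap on a 5 minute schedule
print(meets_headway_standard(11, 5))
print(meets_capacity_standard(18, 20))  # 18 of 20 scheduled trains
print(meets_capacity_standard(17, 20))
```

The first and third cases pass, the second and fourth fail.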

The first target is measured differently in the Customer Service Report above with a +3 minute variation allowed even though scheduled service is often considerably less frequent, especially in Covid times. Service capacity is reported in the CEO’s Report, but not in the Customer Service Report.

Monitoring the System

The Service Standards include the following statements

If the above performance standards are not met on a regular basis for a specific route, TTC will consider a range of options including, adjusting the published schedule, adjusting route timing, providing additional training for drivers or modifying or adding transit priority measures. [p. 17]

…

Each operating division is constantly measuring and monitoring service reliability and operations. The results are based on the real-life, day-to-day observations of operating staff and the input they receive from customers and are used to improve TTC service. [p. 30]

The quality of service actually operating on the street suggests that these activities are not rigorously pursued. More to the point, we have no way of knowing whether the TTC as an organization is aware of the scope of problems it faces because there is no public report reflecting service quality at a route-by-route level. Problems are not flagged and tracked. Rider complaints are met with boilerplate nostrums about traffic congestion and surge loads when a simple reference to online tracking data can show these statements to be false.

Productivity and Evaluation of Changes

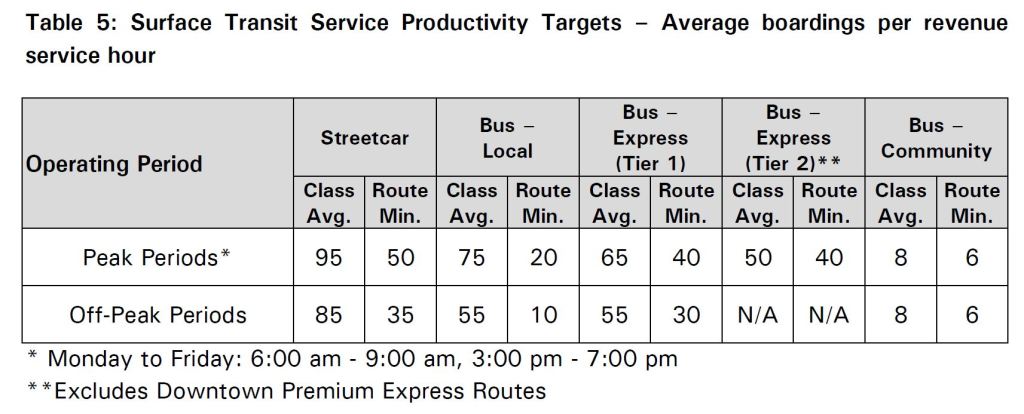

The Standards include productivity targets expressed as boardings per revenue vehicle hour. These are summarized in the accompanying table. These should not be confused with crowding standards that determine when an average vehicle is “full” for service planning purposes. The capacity of a vehicle is recycled as passengers board and leave over the course of a trip.

The primary use of these values is to determine whether a route should be reviewed for service reduction or elimination. To put it another way, if a local bus route can achieve at least 10 boardings per hour, it should be safe from elimination. This was an important part of the standards during the Rob Ford era of service cuts as a mechanism to identify the poorest-of-the-poor within the network.

Although this appears to be a “fair” way of trimming service, there can be distortions thanks to route structure. A short route, by definition, will have a lot of turnover and therefore many boardings per hour. A good example would be a bus that makes a round trip in half an hour or less. Conversely, a long route will have more trips that consume capacity but do not contribute boardings at the same rate even though the capacity in seat-hours consumed is the same. (One rider could travel 10 km, or five could travel 2km each.)

This target has not been invoked during the Covid era because standards were relaxed, but we could see its return should budget cuts force a review of service levels.

Service changes, particularly those involving route restructuring, look at how changes affect rider convenience. This recognizes that various aspects of a trip are perceived differently by riders. Actual travel time counts as “1.0” while waiting and walking time count as 1.5 and 2.0 respectively. Transfers get a particularly big penalty of 10.0.

This means that if a proposed change increases walking or waiting time, the change counts more against the proposal than a comparable saving in time riding a vehicle. Similarly, a new transfer imposed on a trip is penalized. As with other standards, we have not seen much exercise of these measures during the pandemic, and two major changes in the works for 2023 (Eglinton Crosstown and Finch LRT openings) focus on adjusting routes to connect with the new services.
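A hedged sketch of how such weights play out: the example below scores a hypothetical trip before and after a restructuring. The weights are those quoted above. Treating the transfer penalty as 10 weighted minutes per transfer is an assumption made for this example, and the trip times are invented.

```python
WEIGHTS = {"in_vehicle": 1.0, "waiting": 1.5, "walking": 2.0}
TRANSFER_PENALTY = 10.0  # assumed here to mean weighted minutes per transfer


def weighted_time(in_vehicle, waiting, walking, transfers):
    """Perceived trip time in weighted minutes."""
    return (
        WEIGHTS["in_vehicle"] * in_vehicle
        + WEIGHTS["waiting"] * waiting
        + WEIGHTS["walking"] * walking
        + TRANSFER_PENALTY * transfers
    )


# Hypothetical trip: 40 minutes door to door today, 35 after the change.
before = weighted_time(in_vehicle=30, waiting=5, walking=5, transfers=0)
after = weighted_time(in_vehicle=22, waiting=8, walking=5, transfers=1)

print(before)  # 47.5
print(after)   # 54.0
```

The restructured trip is five minutes shorter on the clock, but the extra wait and the new transfer leave it scoring worse.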

The TTC evaluates the economic effect of changes, including fare proposals, by looking at their effect on ridership. With respect to fares, this methodology makes sense. There is no point in raising fares if the result is lost ridership and a corresponding drop in revenue.

Within the Standards report, there is a calculation based on 2015 results which concludes that a 10% fare increase would generate $86 million in new revenue, but at the cost of losing 10.7 million passengers. In other words, for every $100 in new revenue, 12 passengers would be lost.
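The ratio follows directly from the two figures quoted above, as this short calculation shows. The variable names are illustrative.

```python
new_revenue = 86_000_000  # dollars, from the 2015-based calculation
riders_lost = 10_700_000  # passengers, from the same calculation

riders_lost_per_100_dollars = riders_lost / new_revenue * 100

print(f"{riders_lost_per_100_dollars:.1f}")  # 12.4
```

The result rounds to the 12 passengers per $100 cited in the standards.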

This calculation is no longer valid for various reasons:

- One “fare” now buys more travel than in 2015 before the two-hour transfer was introduced in 2018. Trips that are now “chained” for one fare previously were substantially more expensive.

- In recent years, fares have been frozen or have not risen as fast as costs. The perceived effect of a fare increase following a long period of little or no change will be greater than it might be otherwise, but whether this would drive away riders given the cost of alternatives is another matter.

An important issue is that thanks to inflation and other economic forces, that value of 12 passengers lost for each $100 in new revenue is only valid at the time of the calculation and with the underlying assumptions about rider behaviour.

The TTC uses the same metric to evaluate service changes on the premise that spending more on service should be offset by greater ridership. However the goal of 12 new riders per $100 spent appears to have been frozen in time when the Service Standards were written. It is clear that $100 will buy less and less additional service over time thanks to inflation, and so the change needed to get those 12 new riders will be more expensive in 2022 than it was in 2015.

The TTC treats this number as “dimensionless” when in fact it will change year-over-year, and would be different if the comparative fare increase were greater or smaller than 10%.

It would be valid to compare multiple service change proposals for their effect on ridership versus their cost, but an arbitrary number of new riders per dollar is not a valid metric. Indeed one could easily see a day when $100 in new subsidy would have so little effect that it produced no new ridership at all.

Again, this is an issue that will come up when the TTC gets back into the business of evaluating changes to meet budget-defined targets.

Route “Profitability”

The subject often arises of the economic efficiency of transit service, and this inevitably comes down to an attempt at measuring whether a route is “pulling its weight”. That sort of thing appeals to politicians who look on complex municipal services as if they were running a small corner store and every dime came out of their pockets. The problem is that, unlike a corner store, the costs and revenues for a transit network cannot be easily isolated. Moreover, the network is greater than its individual parts.

To date, the TTC has been immune to the “Business/Benefits Case Analysis” mania that afflicts Metrolinx. Their analysis places a huge weight on the imputed value of future reductions in travel time to offset the cost of new infrastructure and service. That notional saving does not actually pay off the transit investment but simply measures the supposed benefit if you agree with the underlying value assumptions. This dubious analysis depends on assumed future conditions and soft “values”. It is hopeless in evaluation of relatively small scale changes such as providing more and better service on the Dufferin bus. Let us hope TTC continues that most basic metric – “is anybody riding”.

Fare revenue is impossible to allocate because fares are not paid on a vehicle-by-vehicle, trip link-by-link basis. Attempts to do this in the past have produced wild distortions when the average fare per kilometer or per boarding were used to allocate revenue. If the average trip is 10km (it is actually slightly less) and revenue is allocated per kilometer, then someone who travels 20km “contributes” twice as much revenue on paper as they actually paid. Similarly a short trip contributes less on paper than the fare paid. This consistently overstates revenue for long trips and understates it for short ones. A similar problem occurs if one uses boardings rather than kilometers because some trips involve more boardings than the system average.
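The distortion is easy to reproduce. In the sketch below the 10km average trip comes from the text, while the flat fare of $3.00 is a hypothetical round number chosen only to keep the arithmetic readable.

```python
AVERAGE_TRIP_KM = 10.0  # rounded figure used in the text
FARE_PAID = 3.00        # hypothetical flat fare, the same for every rider


def revenue_allocated_by_distance(trip_km):
    """Revenue credited to a trip when fares are spread per kilometre."""
    return FARE_PAID * trip_km / AVERAGE_TRIP_KM


for trip_km in (5, 10, 20):
    credited = revenue_allocated_by_distance(trip_km)
    print(trip_km, "km: paid", FARE_PAID, "credited", credited)
```

Every rider paid the same fare, yet the 20km trip is credited with $6.00 and the 5km trip with $1.50.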

A further problem arises because nobody pays the “average” fare. That concept dates from the era of tickets and tokens, strict transfer rules, few concession fares, and no passes, let alone regional co-fares.

This can compound with the length of routes to distort “profitability”. A short route necessarily cannot carry a rider a long distance, and so the cost of carrying any rider there will be low. The result is that any table of cost recovery inevitably has short routes at the top of the list. (Routes like 22 Coxwell always did spectacularly well, but their “profitability” did not result in any improved service.)

In another variation, a long route that has a high turnover of passengers and strong bidirectional demand has many boardings. If revenue is allocated on that basis, the route will do “better” than one where riders take, on average, long journeys, consume more bus capacity per boarding, and seats run empty on the “reverse” trip. This does not invalidate the need for a route, but simply illustrates how differences between routes can produce quite different “financial” results.

The artificiality of a metric can be shown with a simple example: suppose that there is a long route and it is split into two pieces with the level of service and ridership unchanged. All of the riders who travel across the new break in the route now count as two boardings where the day before they counted for only one. This will allocate more revenue to the individual routes making them more “profitable”.
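The split-route effect can be sketched with a handful of invented trips. Stops are numbered along a hypothetical route, each trip is an (origin, destination) pair, and the route is cut at stop 5. Nothing here is TTC data.

```python
def boardings_before_split(trips):
    """On the through route every trip is one boarding."""
    return len(trips)


def boardings_after_split(trips, break_stop):
    """Trips that pass through the break now board twice."""
    total = 0
    for origin, destination in trips:
        crosses = min(origin, destination) < break_stop < max(origin, destination)
        total += 2 if crosses else 1
    return total


# Hypothetical trips on a route with stops numbered 0 to 10.
trips = [(0, 3), (2, 8), (4, 9), (6, 10), (1, 5), (7, 2)]

print(boardings_before_split(trips))
print(boardings_after_split(trips, break_stop=5))
```

The same six riders make the same journeys, but the boarding count rises from 6 to 9 because three of them cross the new break.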

Finally, a fast route has a lower operating cost than a slow one because the latter requires more vehicles and operators to carry the same demand. Costs of routes cannot be directly compared with the idea that the “cheaper” route is somehow more efficient. A more detailed understanding of factors affecting costs and allocated revenue (if that scheme is used) is required, not simply plugging operating and revenue stats into a formula and looking at the sausage that comes out the other end.

It is a fool’s errand to attempt to calculate route profitability, and yet this comes up from time to time. Fortunately, the TTC stopped publishing such numbers a while ago. The real question always should be: are people riding the bus?

Concluding Thoughts

There are more issues than these especially for rapid transit lines with their large fixed infrastructure whose operating cost is independent of ridership, but I will not dwell on the subject here. However, as more rapid transit lines come on stream, Toronto will see jumps in the cost base for transit that must be absorbed somehow.

The question of operating cost calculations and revenue effects inevitably comes up during discussions of system improvements or of trimming service to achieve budgetary aims. If Toronto is to pursue substantial service improvements in a Net Zero plan, or worse, if we are to trim service to fit constrained budgets, we need to understand how the numbers really work in each scenario.

In Part II of this article, I will turn to the CEO’s Report and the manner in which TTC service and operations are presented. Many facets of the system are poorly understood by the Board and by Council, and their usual simplistic directive is to spend less while providing more.

The period since March 2020 has been without precedent in the TTC’s history. The need to clearly understand what we want of our transit system and what that will cost has never been more pressing. Without good analytical material showing transit from a rider’s point of view, we have no hope of assessing options or measuring their success.

Though facts are obviously important (and you provide many that the TTC itself either hides away or has not looked at properly), one’s ‘evaluation’ of services like the TTC is also based on perceptions, and unfortunately the TTC provides many occasions when the ‘customer’ sees things that give little confidence in how things are run or that anyone is ‘connecting the dots’.

I could give many examples, but a visitor might wonder why the platforms of several stations (e.g. St Patrick and Queens Park) have bare walls/ceilings – regulars will know that they have been stripped for several years. (Why?) One might also wonder why so many subway station ceilings lack ‘tiles’ or slats (again for many years).

Steve: St. Pat’s and Queen’s Park tiles were taken out for asbestos removal. They will be replaced in due course. The slatted ceilings in stations have a generic problem that, where they are over the tracks, they require an overnight crew to remove or replace using a work car. A lot of the “replace” activity does not get done, and the TTC removed these slats permanently replacing them with black paint. At some locations, installation of new conduit runs (for example, for WiFi service) required removal of wall/ceiling coverings. Putting them back is not a high priority for the TTC.

Then there are the old signs they never remove – this is actually worse with their new, admittedly more professional looking, plastic-laminated bus stop signs; the old paper signs eventually disintegrated, the new plastic ones do not but nobody from the TTC ever seems to remove them! Then, when a bus route changes they take months to change the Stop signage – many stops on the 172 route still say they are served by the 121 (which has not happened since the 172 route was created in spring 2022). It’s good to note the routes served on bus stops, but then they need to adjust them! Other stops are not removed when a route changes – there is still a southbound stop on Princess @ The Esplanade for the 65 which has not run there for well over a year. (After several complaints they did put up laminated signage saying “Stop Not in Service” – it would have been easier and clearer to simply remove the Stop signage!) Regulars may shrug and accept this, but it certainly puzzles tourists.

Clearly the TTC has silos and nobody seems to be responsible for ensuring that changes made by Silo A are reflected in the responsibilities of Silos B and C!


Thanks for this post Steve. Much to agree with, with a nod.

A couple of added thoughts.

1) Crowding standards don’t seem to account for the impact of riders with mobility aids and/or strollers. A fully seated load on a 38 seat bus is 36 when one bench is put up; if two are put up, it’s 34. This issue of capacity calculation is even more important in rush hour with a crowded vehicle, as a passenger with a mobility aid who requires the ramp to exit will necessarily require many other passengers to disembark so that they have room to get to/from their seating and to/from the door.

This should not be understood as any critique of said passengers, it’s not; but rather of how the TTC does or does not account for their impact on operations.

2a) I fail to understand why ‘Vision’ is not more fully utilized to spot real-time problems. I appreciate that we may not want the system to flag every bunched/gapped set of vehicles. But surely a high bar of some kind can be set where a gap of more than 200% of scheduled headway or more than 10 minutes greater than headway is automatically flagged to relevant staff.

2b) When I do encounter supervisors, I still see them with old school notepads. How are they supposed to manage a route without a tablet showing them real-time vehicle position?

3) I think your idea of ‘exception reporting’ is critical (as in important).
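
The threshold suggested in point 2a can be sketched in a few lines of Python. This is only an illustration of the rule as worded in the comment, not a description of how Vision works: the function name `flag_gaps`, the input format (observed arrival times in minutes at a single stop) and the sample numbers are all assumptions made for the example.

```python
def flag_gaps(arrival_minutes, scheduled_headway, ratio_limit=2.0, excess_limit=10.0):
    """Return (previous, current, gap) for every gap that breaches either threshold.

    A gap is flagged when it is more than `ratio_limit` times the scheduled
    headway, or more than `excess_limit` minutes longer than the scheduled headway.
    """
    flagged = []
    times = sorted(arrival_minutes)
    # Compare each arrival with the one before it at the same stop.
    for prev, cur in zip(times, times[1:]):
        gap = cur - prev
        too_wide_by_ratio = gap > ratio_limit * scheduled_headway
        too_wide_by_minutes = gap > scheduled_headway + excess_limit
        if too_wide_by_ratio or too_wide_by_minutes:
            flagged.append((prev, cur, gap))
    return flagged


# Hypothetical observations: minutes past the hour, 6-minute scheduled headway.
observed = [0, 1, 14, 20, 26, 45]
alerts = flag_gaps(observed, scheduled_headway=6)
for prev, cur, gap in alerts:
    print(f"Gap of {gap} min between arrivals at :{prev:02d} and :{cur:02d}")
```

On a frequent route the 200% test is the one that fires first; on a route with a scheduled headway longer than 10 minutes, the "10 minutes greater than headway" test becomes the tighter of the two. The bunched pair at :00 and :01 is deliberately not flagged here, since the comment asks for a high bar rather than an alert for every irregularity.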

I think I’ll leave it there for the moment!

Steve: Another example of a disruption that can occur is from someone on a streetcar needing the mobility ramp to be deployed for boarding or leaving the car. It’s not their fault for causing a delay, but it adds a few minutes to a trip if only because a streetcar might miss a green light it would otherwise catch. At least the space inside the car is better suited than the space on buses where a mobility device, baby carriage or shopping buggy can completely plug access.

As to what supervisors have, yes the fact that I can see more on my phone than they can with a pad and paper is troubling. Originally the TTC had some special purpose computers built for supervisors, but they were heavy and unwieldy. There is also the question of how much useful info they conveyed.

Typo alert. “unweildy” is spelled “unwieldy.”

Steve: Fixed. Thanks!

‘Carservative’ transit will be lacking; and that’s kind of why we have such albatrosses of expansion projects – to help further burden the system, though we do need a) good supports/maintenance, and b) smart expansion with some political will. Always bear in mind that the costs for cars are well hidden in multiple budgets, and suburban politicians are happy with that, because user pay is really only for transit, and other select groups. Also, the cars are profound contributors to climate change; this happened to be in memory from 15 years ago, but it remains relevant as part of ‘caronic’ denial…

hamish wilson

Carontop,

I’m glad to see you writing about this in such detail. I’ve commented before on the bewildering state of reliability across the system. As James has said in the comments here, it is almost criminal that TTC supervisors are standing around with a clipboard and pen in 2022, after the commission has spent millions on a sophisticated and fully functional system like Vision. It actually makes me mad to see this, because it looks so stupid. If you did not know any better, you could be sure that they are just props for show, to make it look like they are doing something with their time. What relevant information could a static piece of paper provide about real-time route conditions?

My biggest question is this: clearly the TTC has a problem, and it’s getting worse and worse. The organisation has all the tools it needs already in place to address this problem, but it has a completely bloated and ineffective management and supervisory staff who seemingly don’t do any supervising. With the shortcomings being so blatantly obvious, even to a regular rider, how do we effect change? How can we get this gross incompetence to be taken seriously by the Board, and get the Board to take corrective action against this utterly useless CEO and his team of lazy managers?

I’ve often wondered, if the Board and the TTC management were forced to read this blog and take your recommendations to heart, what change might we see in service? I would bet every cent to my name that the change would be transformative, to say the least. So how do we do it? How do we shove this information in the faces of the powers that be and say, “See this? Look, you overpaid morons, this is what’s wrong. Now fix it”?

Maybe I’m being a bit harsh here, but fed up is more like it. We should not have to put up with this. These people are slowly dragging the reputation of the TTC down the drain, leaving behind cynicism over the leadership of the TTC and resentment towards the system and its operators, who bear the brunt of the public’s anger over the poor service. That is something the management at the TTC seems to care very little about.

Steve: I can assure you that this blog is well-read within the TTC. However, real change is hard to come by given reluctance at the top.

Thank you for this blog entry! This summarizes my exact experience as a rider. The system is quickly on the decline. I know that this is a very qualitative observation, but ever since Byford departed the state of the system has been quite apparent. Regarding route management, even the subway seems to be suffering from this, especially on weekday evenings after 6pm and on weekends in general. Subway headways seem to be sliding to 6, even 10 minutes and more nowadays. Dare I say, almost comparable to how the MBTA runs its system.

There are a few things I would like to point out:

-How is it that the TTC communications can’t seem to quickly get their delay messages across the PA instantly? There always seems to be a delay or even no notification. Also, once a delay is cleared why are the on platform displays and PA system still announcing the delay? It’s as if they don’t even care to delete the auto message.

-Why are the TTC’s own next bus arrival displays so off? One would think that they would be identical to what someone sees using their phone or Google transit to predict the next bus. But no, the TTC’s own system is not accurate at all.

Steve: I agree that the mechanics of making and clearing messages need work. Something I see from time to time is announcements of an event being resolved hours after it actually happened. Recently, I got a few eAlerts for the 503 Kingston Road car at 9pm, well after all of the service is bedded down for the night.

As for predictions being off, I too have noticed this, but it depends on where you see it. For example, the displays at shelters and in subway stations run off a TTC system called NVAS (Next Vehicle Arrival System), and there are times when the predictions feed is seriously delayed getting from Vision to NVAS. What exactly is going on under the covers I don’t know, but the result is “predictions” that are hopelessly inappropriate because vehicles have come and gone. However, an app like Rocketman might show different info, and this implies that its feed is not subject to the same delay, and it “sees” events that are more or less current. This is an example of the lack of attention to detail at the TTC, and a sense that too few people with any real influence actually ride the system and notice these problems.

And I won’t say anything about eAlerts advising of diversions that are physically impossible.

Like your line about e-alerts that are physically impossible… a few of them lately (eastbound on Keele, northbound on Sheppard, and the best had to be northbound on King Street)… does no one actually read these before sending them out?

I actually had to phone customer service about their website advising that a 2 night shutdown on Line 2 between College and St. Clair was scheduled… when I pointed it out they said “So???” I replied that Line 2 is Bloor Danforth, NOT YUS. I was accused of nitpicking…

Steve: But, of course, if the notice is linked in the site to Line 2 rather than Line 1, it would never appear on the list of notices if someone was only looking at Line 1.

They short turned multiple consecutive King cars tonight to turn what looked like a relatively evenly spaced service into a big gap. I’d guess this was to get things back “on schedule” due to earlier delays. They always find new ways to impress.

Thank you, Steve, for another excellent article.

My big takeaway is that TTC management has learned well the lessons of the book, “How to Lie With Statistics.”

One notoriously effective method of lying with statistics is to abuse the concept of the average. For example, Steve wrote:

In terms of actual lived experience, averages rarely count for much. There is a joke about this: Bill Gates walks into a bar. On the average, everyone in that bar is now a multi-millionaire.

This form of deception is so key to TTC bamboozlement that it is very important to understand how it works. For example, consider a bus route that is a feeder line to a subway station. The bus starts out at the end of its line either empty or with one or two passengers who got on at the very last bus stop at the end of the line. As the bus picks up more and more passengers on its way to the station, it becomes overcrowded, a sardine-can, Covid super-spreader experience for the packed-in passengers. However, because it was almost empty at the outer parts of the line, ON AVERAGE that bus may have been only half full. This allows the TTC to dismiss the lived reality of the overcrowded experience of actual passengers.

A far better metric would be a reporting system that reports whenever an actual bus exceeds the TTC loading standard. Such a reporting system would accurately report the actual lived experience of TTC passengers, but would be very embarrassing for the TTC.
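
The difference between the two metrics is easy to see with a small worked example in Python. All figures here are invented for illustration: the stop-by-stop loads are hypothetical, the loading standard of 50 is an assumed round number rather than the TTC's actual standard, and `summarize_trip` is a made-up helper name.

```python
def summarize_trip(loads, standard):
    """Return the average load, the peak load, and the stops where load exceeds the standard."""
    average = sum(loads) / len(loads)
    peak = max(loads)
    # Indices of the stops at which the bus left with more riders than the standard allows.
    over = [stop for stop, load in enumerate(loads) if load > standard]
    return average, peak, over


# Hypothetical passenger counts leaving each stop on an inbound feeder trip.
loads = [2, 5, 10, 18, 27, 38, 50, 58, 62]
standard = 50  # assumed loading standard, for illustration only

average, peak, over = summarize_trip(loads, standard)
print(f"Average load: {average:.0f} of {standard}")
print(f"Peak load: {peak}, over standard at stops {over}")
```

Averaged over the whole trip this bus looks comfortably under the standard, yet it exceeds the standard on the last two segments before the station, which is where most of its riders are on board. An exception report built on the `over` list would count this trip as overcrowded; a report built on the average would not.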

Steve: I am working on Part 2 of this article to look at the existing CEO’s Report and how the information it presents should be changed to reveal what is actually happening.
